

2022

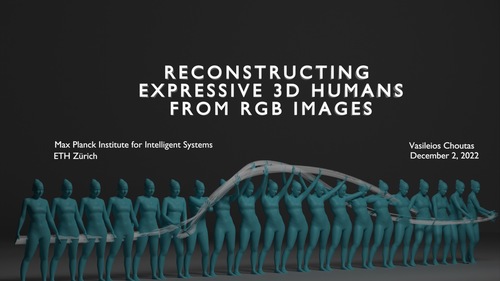

Reconstructing Expressive 3D Humans from RGB Images

ETH Zurich, Max Planck Institute for Intelligent Systems and ETH Zurich, December 2022 (thesis)

To interact with our environment, we need to adapt our body posture and grasp objects with our hands. During a conversation, our facial expressions and hand gestures convey important non-verbal cues about our emotional state and intentions towards our fellow speakers. Thus, modeling and capturing 3D full-body shape and pose, hand articulation and facial expressions are necessary to create realistic human avatars for augmented and virtual reality. This is a complex task, due to the large number of degrees of freedom for articulation, body shape variance, occlusions from objects and self-occlusions from body parts (e.g. crossing our hands), and variation in subject appearance. The community has thus far relied on expensive and cumbersome equipment, such as multi-view cameras or motion capture markers, to capture the 3D human body. While this approach is effective, it is limited to a small number of subjects and indoor scenarios. Using monocular RGB cameras would greatly simplify the avatar creation process, thanks to their lower cost and ease of use. These advantages come at a price, though, since RGB capture methods need to deal with occlusions, perspective ambiguity and large variations in subject appearance, in addition to all the challenges posed by full-body capture. In an attempt to simplify the problem, researchers generally adopt a divide-and-conquer strategy, estimating the body, face and hands with distinct methods using part-specific datasets and benchmarks. However, the hands and face constrain the body and vice versa, e.g. the position of the wrist depends on the elbow, shoulder, etc.; the divide-and-conquer approach cannot exploit this constraint.
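
The dependency of the wrist on the shoulder and elbow can be made concrete with planar forward kinematics for a two-segment arm. This is a minimal sketch under stated assumptions: the segment lengths, angles, and the helper `wrist_position` are hypothetical illustration values, not part of SMPL-X or any method in the thesis. It only shows that the wrist location is a function of the upper-body joint rotations, which a hand-only estimator ignores.

```python
import math


def wrist_position(shoulder, upper_len, fore_len, shoulder_angle, elbow_angle):
    """Planar forward kinematics for a hypothetical two-segment arm.

    Angles are in radians. The elbow angle is measured relative to the upper
    arm, so the forearm's absolute orientation is shoulder_angle + elbow_angle.
    """
    sx, sy = shoulder
    # Elbow position: rotate the upper arm about the shoulder joint.
    ex = sx + upper_len * math.cos(shoulder_angle)
    ey = sy + upper_len * math.sin(shoulder_angle)
    # Wrist position: rotate the forearm about the elbow, composing both rotations.
    wx = ex + fore_len * math.cos(shoulder_angle + elbow_angle)
    wy = ey + fore_len * math.sin(shoulder_angle + elbow_angle)
    return wx, wy


# Same elbow bend, different shoulder rotation: the wrist moves with the shoulder.
straight_out = wrist_position((0.0, 0.0), 0.3, 0.25, 0.0, 0.0)
raised = wrist_position((0.0, 0.0), 0.3, 0.25, math.pi / 2, 0.0)
print(straight_out, raised)
```

Because the wrist is computed through the shoulder and elbow, a wrist estimate that disagrees with the arm pose signals an error in one of the two, which is the kind of cross-part constraint a joint full-body model can use.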

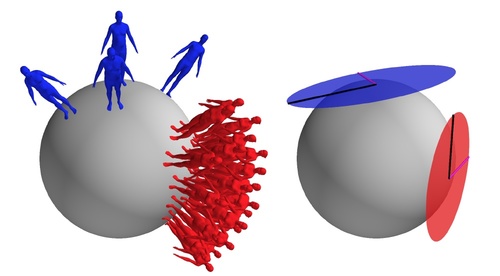

In this thesis, we aim to reconstruct the full 3D human body using only readily accessible monocular RGB images. As a first step, we introduce a parametric 3D body model, called SMPL-X, that can represent full-body shape and pose, hand articulation and facial expression. Next, we present an iterative optimization method, named SMPLify-X, that fits SMPL-X to 2D image keypoints. While SMPLify-X can produce plausible results if the 2D observations are sufficiently reliable, it is slow and sensitive to initialization. To overcome these limitations, we introduce ExPose, a neural network regressor that predicts SMPL-X parameters from an image using body-driven attention, i.e. by zooming in on the hands and face after predicting the body. From the zoomed-in part images, dedicated part networks predict the hand and face parameters. ExPose combines the independent body, hand, and face estimates by trusting them equally. This approach, however, does not fully exploit the correlation between parts and fails in the presence of challenges such as occlusion or motion blur. Thus, we need a better mechanism to aggregate information from the full-body and part images. PIXIE uses neural networks called moderators that learn to fuse information from these two image sets before predicting the final part parameters. Overall, the addition of the hands and face leads to noticeably more natural and expressive reconstructions.
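
The difference between trusting the body and part estimates equally and fusing them adaptively can be sketched as a confidence-weighted average of two feature vectors, one computed from the full-body image and one from the zoomed-in part crop. This is a minimal illustration, not PIXIE's implementation: the confidence scores are plain inputs here, whereas a learned moderator would predict them, and the helpers `softmax` and `fuse` as well as the feature values are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(body_feat, part_feat, body_score, part_score):
    """Confidence-weighted fusion of two feature vectors (hypothetical sketch).

    The scores stand in for the output of a learned moderator; the softmax turns
    them into non-negative weights that sum to one.
    """
    w_body, w_part = softmax([body_score, part_score])
    return [w_body * b + w_part * p for b, p in zip(body_feat, part_feat)]


body = [1.0, 0.0, 2.0]  # hypothetical features from the full-body image
hand = [3.0, 4.0, 0.0]  # hypothetical features from the zoomed-in hand crop

# Equal scores give weights of 0.5 each: a plain average of the two estimates.
equal = fuse(body, hand, 0.0, 0.0)
# A low part score (e.g. a blurred or occluded hand) shifts weight to the body.
occluded = fuse(body, hand, 2.0, -2.0)
```

With equal scores the result corresponds to the equal-trust combination described for ExPose; lowering the part score pulls the fused features towards the body estimate.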

Creating high-fidelity avatars from RGB images requires accurate estimation of 3D body shape. Although existing methods are effective at predicting body pose, they struggle with body shape. We identify the lack of proper training data as the cause. To overcome this obstacle, we propose to collect internet images from fashion-model websites, together with anthropometric measurements. At the same time, we ask human annotators to rate images and meshes according to a predefined set of linguistic attributes. We then define mappings between measurements, linguistic shape attributes and 3D body shape. Equipped with these mappings, we train a neural network regressor, SHAPY, that predicts accurate 3D body shapes from a single RGB image. We observe that existing 3D shape benchmarks lack subject variety and/or ground-truth shape. Thus, we introduce a new benchmark, Human Bodies in the Wild (HBW), which contains images of humans and their corresponding 3D ground-truth body shape. SHAPY shows how we can overcome the lack of in-the-wild images with 3D shape annotations through easy-to-obtain anthropometric measurements and linguistic shape attributes.
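
One simple way to map annotator ratings of a linguistic attribute to a body-shape parameter is a linear least-squares fit. The abstract does not specify the form of SHAPY's mappings, so the linear model, the attribute, the five data points, and the helper `fit_linear_map` below are all hypothetical; the sketch only shows how such a mapping can be learned from paired ratings and shape coefficients and then applied to a new rating.

```python
def fit_linear_map(ratings, coeffs):
    """Ordinary least-squares fit of coeff ~ slope * rating + intercept."""
    n = len(ratings)
    mean_r = sum(ratings) / n
    mean_c = sum(coeffs) / n
    cov = sum((r - mean_r) * (c - mean_c) for r, c in zip(ratings, coeffs))
    var = sum((r - mean_r) ** 2 for r in ratings)
    slope = cov / var
    intercept = mean_c - slope * mean_r
    return slope, intercept


# Hypothetical data: averaged annotator ratings (1 to 5) of one attribute and the
# value of one shape coefficient for the same five bodies.
ratings = [1.0, 2.0, 3.0, 4.0, 5.0]
coeffs = [-1.9, -1.1, 0.1, 0.9, 2.0]

slope, intercept = fit_linear_map(ratings, coeffs)
predicted = slope * 3.5 + intercept  # shape coefficient for an unseen rating
```

A realistic mapping would relate several attributes or measurements to several shape coefficients; the one-dimensional case is used only to keep the arithmetic easy to follow.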

Regressors that estimate 3D model parameters are robust and accurate, but often fail to tightly fit the observations. Optimization-based approaches tightly fit the data by minimizing an energy function composed of a data term that penalizes deviations from the observations and priors that encode our knowledge of the problem. Finding the balance between these terms and implementing a performant version of the solver is a time-consuming and non-trivial task. Machine-learned continuous optimizers combine the benefits of both regression and optimization approaches. They learn the priors directly from data, avoiding the need for hand-crafted heuristics and loss-term balancing, and benefit from optimized neural network frameworks for fast inference. Inspired by the classic Levenberg-Marquardt algorithm, we propose a neural optimizer that outperforms classic optimization, regression and hybrid optimization-regression approaches. Our proposed update rule uses a weighted combination of gradient descent and a network-predicted update. To show the versatility of the proposed method, we apply it to three other problems, namely (i) full-body estimation from 2D keypoints, (ii) full-body estimation from head and hand locations provided by a head-mounted device, and (iii) face tracking from dense 2D landmarks. Our method can easily be applied to new model fitting problems and offers a competitive alternative to well-tuned traditional model fitting pipelines, in terms of both accuracy and speed.
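
The idea of blending a gradient-descent step with a predicted update can be illustrated on a toy fitting problem whose energy has a data term and a prior, E(x) = ||Ax - b||^2 + lambda * ||x||^2. In this sketch the "network-predicted update" is replaced by a damped Gauss-Newton (Levenberg-Marquardt-style) step so that it runs without a trained network; that substitution, the fixed blending weight `alpha`, the step size `eta`, the damping `mu`, the toy matrix `A`, and all helper names are assumptions. In the thesis, the update and its weighting are learned from data.

```python
def grad_and_hessian(x, A, b, lam):
    """Gradient and Hessian of E(x) = ||A x - b||^2 + lam * ||x||^2 (2 parameters)."""
    m = len(b)
    r = [A[i][0] * x[0] + A[i][1] * x[1] - b[i] for i in range(m)]  # data residuals
    g = [2.0 * (sum(A[i][j] * r[i] for i in range(m)) + lam * x[j]) for j in range(2)]
    H = [[2.0 * (sum(A[i][j] * A[i][k] for i in range(m)) + (lam if j == k else 0.0))
          for k in range(2)] for j in range(2)]
    return g, H


def damped_newton_step(g, H, mu):
    """Solve (H + mu * I) d = -g for a 2x2 system (Levenberg-Marquardt-style step)."""
    p, q = H[0][0] + mu, H[0][1]
    r, s = H[1][0], H[1][1] + mu
    det = p * s - q * r
    return [-(s * g[0] - q * g[1]) / det, -(-r * g[0] + p * g[1]) / det]


def fit(x0, A, b, lam, alpha, eta=0.05, mu=0.1, iters=50):
    """Blend a gradient-descent step with a 'predicted' step at every iteration.

    alpha=1.0 reduces to plain gradient descent. The damped Newton step is a
    stand-in (assumption) for the network-predicted update.
    """
    x = list(x0)
    for _ in range(iters):
        g, H = grad_and_hessian(x, A, b, lam)
        predicted = damped_newton_step(g, H, mu)
        x = [x[j] + alpha * (-eta * g[j]) + (1.0 - alpha) * predicted[j] for j in range(2)]
    return x


A = [[2.0, 0.0], [0.0, 1.0]]
b = [4.0, 3.0]
blended = fit([0.0, 0.0], A, b, lam=0.1, alpha=0.5)
plain_gd = fit([0.0, 0.0], A, b, lam=0.1, alpha=1.0)
```

On this toy problem both runs approach the minimizer, but the blended rule gets there in far fewer iterations than plain gradient descent with the same step size, which is the motivation for adding a second-order-style term to the update.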

To summarize, we propose a new and richer representation of the human body, SMPL-X, that is able to jointly model 3D human body pose and shape, facial expressions and hand articulation. We propose methods (SMPLify-X, ExPose and PIXIE) that estimate SMPL-X parameters from monocular RGB images, progressively improving the accuracy and realism of the predictions. To further improve reconstruction fidelity, we demonstrate how easy-to-collect internet data and human annotations can overcome the lack of 3D shape data, and use them to train a model, SHAPY, that predicts accurate 3D body shape from a single RGB image. Finally, we propose a flexible learnable update rule for parametric human model fitting that outperforms both classic optimization and neural network approaches. This approach is easily applicable to a variety of problems, unlocking new applications in AR/VR scenarios.

2019

Scientific Report 2016 - 2018

2019 (mpi_year_book)

This report presents research done at the Max Planck Institute for Intelligent Systems from January 2016 to December 2018. It is our third report since the founding of the institute in 2011.

This status report is organized as follows: we begin with an overview of the institute, including its organizational structure (Chapter 1). The central part of the scientific report consists of chapters on the research conducted by the institute’s departments (Chapters 2 to 5) and its independent research groups (Chapters 6 to 18), as well as the work of the institute’s central scientific facilities (Chapter 19). For entities founded after January 2016, the respective report sections cover work done from the date of the establishment of the department, group, or facility.

2015

Proceedings of the 37th German Conference on Pattern Recognition

Gall, J., Gehler, P., Leibe, B.

Springer, German Conference on Pattern Recognition, October 2015 (proceedings)

2014

Model transport: towards scalable transfer learning on manifolds - supplemental material

Freifeld, O., Hauberg, S., Black, M. J.

(9), April 2014 (techreport)

This technical report is complementary to "Model Transport: Towards Scalable Transfer Learning on Manifolds" and contains proofs, an explanation of the attached video (visualization of bases from the body shape experiments), and high-resolution images of selected results of individual reconstructions from the shape experiments. It is identical to the supplemental material submitted to the Conference on Computer Vision and Pattern Recognition (CVPR 2014) in November 2013.

2013

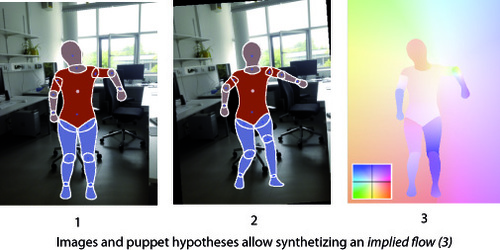

Puppet Flow

(7), Max Planck Institute for Intelligent Systems, October 2013 (techreport)

We introduce Puppet Flow (PF), a layered model describing the optical flow of a person in a video sequence. We consider video frames composed of two layers: a foreground layer corresponding to a person, and a background layer.

We model the background as an affine flow field. The foreground layer, being a moving person, requires reasoning about the articulated nature of the human body. We thus represent the foreground layer with the Deformable Structures (DS) model, a parametrized 2D part-based human body representation. We call the motion field defined by the articulated motion and deformation of the DS model a Puppet Flow. By exploiting the DS representation, Puppet Flow is a parametrized optical flow field whose parameters are the person's pose, gender and body shape.

A Quantitative Analysis of Current Practices in Optical Flow Estimation and the Principles Behind Them

Sun, D., Roth, S., Black, M. J.

(CS-10-03), Brown University, Department of Computer Science, January 2013 (techreport)

2012

Coregistration: Supplemental Material

(No. 4), Max Planck Institute for Intelligent Systems, October 2012 (techreport)

Lie Bodies: A Manifold Representation of 3D Human Shape. Supplemental Material

(No. 5), Max Planck Institute for Intelligent Systems, October 2012 (techreport)

MPI-Sintel Optical Flow Benchmark: Supplemental Material

(No. 6), Max Planck Institute for Intelligent Systems, October 2012 (techreport)

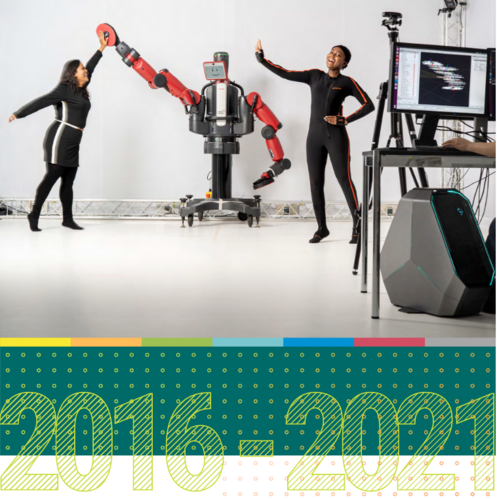

Scientific Report 2016 - 2021

(mpi_year_book)

This report presents research done at the Max Planck Institute for Intelligent Systems from January 2016 to November 2021. It is our fourth report since the founding of the institute in 2011. Because the upcoming evaluation is an extended one, the report covers a longer reporting period.

This scientific report is organized as follows: we begin with an overview of the institute, including an outline of its structure, an introduction to our latest research departments, and a presentation of our main collaborative initiatives and activities (Chapter 1). The central part of the scientific report consists of chapters on the research conducted by the institute’s departments (Chapters 2 to 6) and its independent research groups (Chapters 7 to 24), as well as the work of the institute’s central scientific facilities (Chapter 25). For entities founded after January 2016, the respective report sections cover work done from the date of the establishment of the department, group, or facility. These chapters are followed by a summary of selected outreach activities and scientific events hosted by the institute (Chapter 26). The scientific publications of the featured departments and research groups published during the 6-year review period complete this scientific report.