Robot Perception Group Github Organization Page

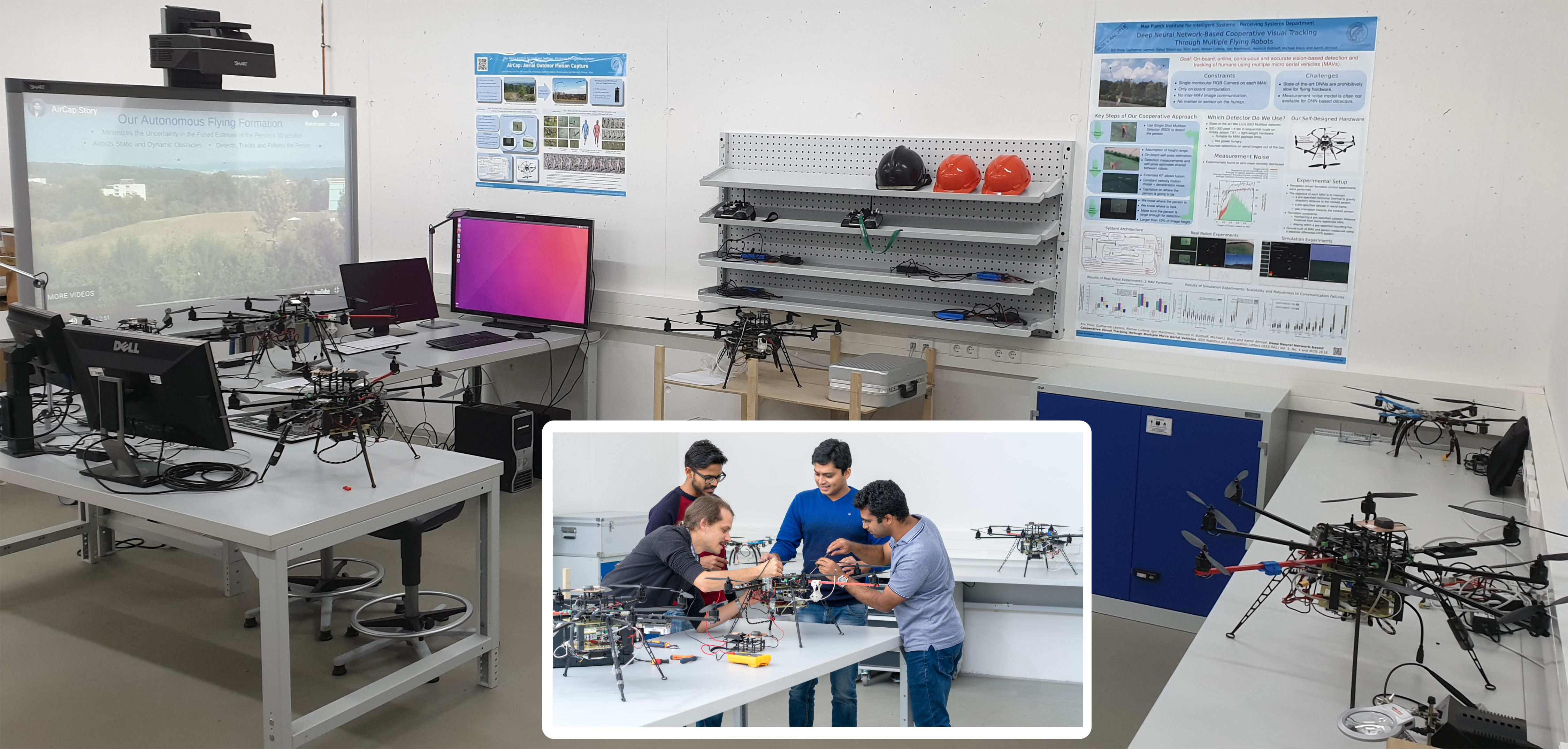

Our focus is on vision-based perception in multi-robot systems. Our goal is to understand how teams of robots, especially flying robots, can act (navigate, cooperate and communicate) in optimal ways using only raw sensor inputs, e.g., RGB images and IMU measurements. To that end, we study novel methods for active perception, sensor fusion and state estimation in multi-robot systems. To facilitate our research, we also design and develop novel robot platforms.

Multi-robot Active Perception -- We investigate methods for multi-robot formation control based on cooperative target perception, without relying on a pre-specified formation geometry. We have developed methods for teams of mobile aerial and ground robots, equipped only with RGB cameras, to maximize their joint perception of moving persons [ ] [ ] or objects in 3D space by actively steering the formation into configurations that facilitate joint perception. We have introduced and rigorously tested active perception methods using novel detection and tracking pipelines [ ] and a nonlinear model predictive control (MPC) based formation controller [ ] [ ]. Together, these form the front-end of our autonomous aerial motion capture (AirCap) system (AirCap Front-End).
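To illustrate why the formation geometry matters for joint perception, here is a minimal 2D sketch. It is not the group's controller: it assumes a hypothetical camera model in which each robot measures the target position with a large uncertainty along its viewing direction (depth) and a small one across it, and it fuses the robots' measurements by adding their information matrices. The function names and noise values are illustrative assumptions.

```python
import math

def info_matrix(bearing, sigma_depth=1.0, sigma_lateral=0.1):
    """2x2 information matrix of one robot's target measurement.

    `bearing` is the viewing direction in radians. The noise values are
    assumed for illustration: cameras are typically less certain in depth.
    """
    c, s = math.cos(bearing), math.sin(bearing)
    a = 1.0 / sigma_depth ** 2    # information along the viewing direction
    b = 1.0 / sigma_lateral ** 2  # information across the viewing direction
    # R * diag(a, b) * R^T with R = [[c, -s], [s, c]]
    return ((a * c * c + b * s * s, (a - b) * c * s),
            ((a - b) * c * s, a * s * s + b * c * c))

def fused_position_variance(bearings):
    """Trace of the fused target covariance for robots at the given bearings."""
    ixx = ixy = iyy = 0.0
    for bearing in bearings:
        m = info_matrix(bearing)
        ixx += m[0][0]
        ixy += m[0][1]
        iyy += m[1][1]
    det = ixx * iyy - ixy * ixy
    # Trace of the inverse of a symmetric 2x2 matrix.
    return (ixx + iyy) / det

if __name__ == "__main__":
    print(fused_position_variance([0.0, 0.0]))          # both robots on the same side
    print(fused_position_variance([0.0, math.pi / 2]))  # robots at right angles
```

Under these assumed noise values, two robots viewing from the same direction give a fused variance of about 0.505, while two robots at right angles give about 0.0198. A formation controller driven by perception can exploit exactly this kind of difference.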

Multi-view Pose and Shape Estimation for Motion Capture (MoCap) -- For human MoCap, we develop methods to estimate 3D pose and shape from images taken by multiple, approximately calibrated cameras. Such image datasets are obtained using our AirCap system's front-end running an active perception method. We treat the outputs of 2D joint detectors as noisy sensor measurements and jointly optimize for the human pose, the human shape and the camera extrinsics [ ] (AirCap Back-End).
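The idea of treating 2D joint detections as noisy measurements can be sketched as a confidence-weighted reprojection cost. This is a simplified illustration, not the published objective. It assumes a hypothetical pinhole camera with unit focal length, takes the 3D joints directly as input instead of deriving them from a body model, and leaves out any priors. A joint optimizer would minimize this cost over both the 3D joints and each camera's rotation and translation.

```python
def project(point, rotation, translation, focal=1.0):
    """Project a 3D point into a pinhole camera with extrinsics (R, t)."""
    # Transform into the camera frame: X_c = R * X + t
    xc = [sum(rotation[i][k] * point[k] for k in range(3)) + translation[i]
          for i in range(3)]
    return (focal * xc[0] / xc[2], focal * xc[1] / xc[2])

def reprojection_cost(joints_3d, cameras, detections):
    """Sum of confidence-weighted squared 2D errors over cameras and joints.

    cameras:    list of (rotation, translation) pairs, one per camera
    detections: per camera, a list of (u, v, confidence), one per joint
    """
    total = 0.0
    for (rotation, translation), camera_detections in zip(cameras, detections):
        for joint, (u, v, confidence) in zip(joints_3d, camera_detections):
            pu, pv = project(joint, rotation, translation)
            total += confidence * ((pu - u) ** 2 + (pv - v) ** 2)
    return total

if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    joints = [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0)]
    cameras = [(identity, [0.0, 0.0, 0.0])]
    detections = [[(0.1, 0.0, 1.0), (0.5, 0.0, 1.0)]]
    print(reprojection_cost(joints, cameras, detections))
```

Weighting each term by the detector confidence lets unreliable detections, for example of occluded joints, contribute less to the estimate.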

Multi-robot Sensor Fusion -- We study and develop unified methods for sensor fusion that scale not only to large environments but also, simultaneously, to a large number of sensors and teams of robots [ ]. We have developed several methods for unified and integrated multi-robot cooperative localization and target tracking. Here "unified" means that the poses of all robots and targets are estimated by every other robot, and "integrated" means that disagreement among sensors, inconsistent sensor measurements, occlusions and sensor failures are handled within a single Bayesian framework. The methods are either filter-based (filtering) [ ] or pose-graph optimization-based (smoothing). Each category has its own advantages with respect to the available computational resources and the level of estimation accuracy. We have also developed a novel moving-horizon technique [ ] for a hybrid method that combines the advantages of both kinds of approaches.
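As a toy illustration of the filter-based category, the following sketch fuses scalar target-position measurements from several robots with a standard Kalman measurement update. It is a deliberately simplified assumption, not the group's method: the state is one-dimensional, and a missing measurement (represented here by `None`, e.g. an occluded target or a failed sensor) is simply skipped.

```python
def kalman_update(mean, variance, measurement, measurement_variance):
    """Standard scalar Kalman measurement update."""
    gain = variance / (variance + measurement_variance)
    new_mean = mean + gain * (measurement - mean)
    new_variance = (1.0 - gain) * variance
    return new_mean, new_variance

def fuse_measurements(prior_mean, prior_variance, robot_measurements):
    """Fuse measurements from several robots into one target estimate.

    robot_measurements: list of (value, variance) tuples, or None for a
    robot that currently has no measurement (occlusion or sensor failure).
    """
    mean, variance = prior_mean, prior_variance
    for item in robot_measurements:
        if item is None:
            continue  # no information from this robot at this time step
        value, measurement_variance = item
        mean, variance = kalman_update(mean, variance, value, measurement_variance)
    return mean, variance

if __name__ == "__main__":
    # Robot 1 measures 2.0 (variance 1.0), robot 2 is occluded,
    # robot 3 measures 1.0 (variance 0.5).
    print(fuse_measurements(0.0, 1.0, [(2.0, 1.0), None, (1.0, 0.5)]))
```

Each fused measurement reduces the variance of the estimate, while a robot without a measurement leaves it unchanged. A smoothing approach would instead re-estimate a window or the full history of states at once.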

New Robot Platforms -- To have extensive access to the hardware, we design and build most of our robotic platforms ourselves. Currently, our main flying platforms include 8-rotor octocopters. More details can be found at https://ps.is.tue.mpg.de/pages/outdoor-aerial-motion-capture-system.

There are currently two ongoing AirCap projects in our group: 3D Motion Capture and Perception-Based Control.

Group Leader

PhD Students

- Eric Price (Co-supervised, Main Supervisor: Michael Black; Sep '16 -- present)

- Nitin Saini (Co-supervised, Main Supervisor: Michael Black; Apr '18 -- present)

- Elia Bonetto (Mar '20 -- present, Co-supervisor: Michael Black)

- Yu-Tang Liu (Aug '20 -- present, Co-supervisor: Michael Black)

Master/Bachelor Students

- Halil Acet (Jul '19 -- present, Research Assistant)

- Michael Pabst (Nov '19 -- present, Master Intern)

Previous PhD Students

- Rahul Tallamraju (Co-supervised, Main Supervisor: Professor Kamalakar Karlapalem; Mar '18 -- Aug '20)

Previous Master and Bachelor Students

- Yilin Ji (Dec '19 -- Jul '20)

- Guilherme Lawless (Sep '16 -- Sep '17, PhD Intern) Currently at The Nano Foundation.

- Roman Ludwig (Sep '17 -- Jul '19, Research Assistant) Currently a PhD student at ETH Zürich.

- Igor Martinović (with thesis, Sep '17 -- Sep '19, Research Assistant and Master Student) Currently at Vector Informatik GmbH.

- Nowfal Manakkaparambil Ali (Jul '19 -- Sep '19, Research Assistant) Currently at Fraunhofer Institute.

- Ivy Nuo Chen (Aug '19 -- Oct '19, Bachelor Intern) Currently at Temple University, USA.

- Eugen Ruff (Research Engineer, Currently at Bosch)

- Soumyadeep Mukherjee (Bachelor Intern, Currently at udaan.com)

- Raman Butta (Bachelor Intern, Currently at Indian Oil)

- David Sanz (PhD Intern)

Source Code and Resources

News: Nodes and packages specific to our IEEE RA-L + IROS 2020 (published), IEEE RA-L 2019 (published) and ICCV 2019 (published) papers can be found on our Github organization page.

List of required hardware

- Flying platform capable of carrying 2 kg of payload or more

- OpenPilot Revolution flight controllers

- HD Cameras

- NVIDIA Jetson TX1 embedded GPU

- Onboard computer (PC): Intel Core i7 CPU, Ubuntu 16.04

List of required 3rd party Open Source Packages

Project Source Code

- LibrePilot modified flight controller firmware and ROS interface (based on LibrePilot)

- SSD Multibox detection server (based on SSD Multibox)

- AirCap Main Public Code Repository

- Rotors Gazebo Simulation Environment specific to the AirCap project (based on Rotors Simulator)

1. Markerless Outdoor Human Motion Capture Using Multiple Autonomous Micro Aerial Vehicles. [ICCV 2019 (published)] ROS-based open source code is provided. See the Resources tab of this project page.

2. Active Perception-driven Formation Control of multiple MAVs. [IEEE RA-L 2019 (published)] ROS-based open source code is provided. See the Resources tab of this project page.

3. Cooperative Detection and Tracking using DNNs on Multiple MAVs. [IEEE RA-L + IROS 2018 (published)] See the Publications tab of this project page.

4. Multi-UAV Trajectory Planning and Collision Avoidance. [SSRR 2018 (published)] See the Publications tab of this project page.