A perception-driven formation of 3 self-designed octocopters tracking a person walking on sloped terrain.

Goal

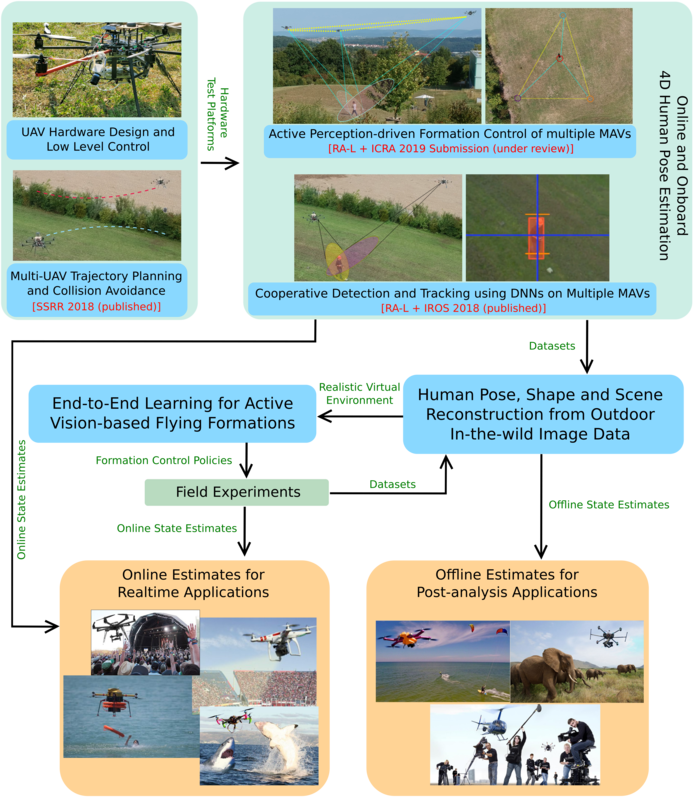

The goal of the project 'AeRial outdoor motion CAPture' (AirCap) is to perform on-board, online and real-time full body pose and offline shape estimation of humans and animals in outdoor scenarios, using a team of micro aerial vehicles (MAVs) with only on-board cameras. It has been partially funded by the Max Planck Grassroots Project MAVOCAP.

Motivation

Human and animal full-body motion capture (MoCap) in outdoor scenarios is a challenging and largely unsolved problem. MoCap systems like Vicon, OptiTrack and the 4D Dynamic Body Scanner at MPI-IS Tuebingen achieve a high degree of accuracy in indoor settings. Besides being bulky, they rely on reflected infrared light and on precisely calibrated cameras fixed to walls or ceilings. Consequently, such systems cannot be used for MoCap in outdoor scenarios, where ambient light conditions keep changing and permanent fixtures cannot be installed in the environment. Our outdoor MoCap solution involves flying robots with only on-board equipment, e.g., cameras, Intel i7 CPUs and NVIDIA Jetson TX1 GPU modules.

Overview of AirCap's Research Threads (See 'Research Threads' tab for more details.)

1. Active Perception-driven Formation Control of multiple MAVs.

- ICRA 2019 Submission (under review)

- ROS-based open source code is provided. See the Resources tab of this project page.

2. Cooperative Detection and Tracking using DNNs on Multiple MAVs.

3. Multi-UAV Trajectory Planning and Collision Avoidance.

Below we describe the most important and ongoing research threads within this project.

1. Active Perception-driven Formation Control of multiple MAVs. [ICRA 2019 Submission (under review)] ROS-based open source code is provided.

The goal of this research thread is to enable a team of MAVs to actively perceive and track a moving person in outdoor environments in the presence of static and dynamic obstacles. To this end, we have developed a novel decentralized convex model predictive controller (MPC) for active cooperative perception. The crux of our method lies in decoupling the goal of active tracking into a convex quadratic objective and linear constraints. We do so by identifying inter-robot spatial dependencies that not only avoid inter-robot collisions but also minimize the joint target uncertainty. Specifically, we show how these dependencies enforce an angular configuration among the MAVs that achieves the desired objective while keeping the MPC formulation convex. Multiple real-robot experiments involving 3 MAVs are presented, along with comparisons to state-of-the-art approaches, to showcase the efficiency of our method. Moreover, extensive simulation results demonstrate its scalability and robustness.
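To illustrate why an angular configuration among the MAVs can reduce the joint target uncertainty, here is a minimal Python sketch. It is not the controller described above; it assumes that each MAV's 2D estimate of the target has Gaussian noise that is large along its viewing direction (depth is hard to observe with a monocular camera) and small perpendicular to it, and that estimates are fused by summing information matrices. The function names and noise values (`sigma_range`, `sigma_bearing`) are hypothetical.

```python
import math


def information_matrix(theta, sigma_range, sigma_bearing):
    """Information matrix (inverse covariance) of one MAV's 2D target estimate.

    Hypothetical noise model: std sigma_range along the viewing direction
    theta and std sigma_bearing perpendicular to it.
    Returns the (xx, xy, yy) entries of the symmetric 2x2 matrix.
    """
    c, s = math.cos(theta), math.sin(theta)
    a = 1.0 / sigma_range ** 2    # information along the viewing ray
    b = 1.0 / sigma_bearing ** 2  # information across the viewing ray
    # R(theta) @ diag(a, b) @ R(theta)^T, written out for a 2x2 matrix
    return (a * c * c + b * s * s, (a - b) * c * s, a * s * s + b * c * c)


def fused_uncertainty(thetas, sigma_range=2.0, sigma_bearing=0.2):
    """Determinant of the fused covariance for MAVs viewing from angles thetas."""
    ixx = ixy = iyy = 0.0
    for theta in thetas:
        xx, xy, yy = information_matrix(theta, sigma_range, sigma_bearing)
        ixx += xx
        ixy += xy
        iyy += yy
    # For the fused Gaussian, det(Sigma) = 1 / det(summed information)
    return 1.0 / (ixx * iyy - ixy * ixy)


if __name__ == "__main__":
    n = 3
    spread = [2.0 * math.pi * k / n for k in range(n)]  # 120 degrees apart
    clustered = [0.0, 0.1, 0.2]                          # nearly the same view
    print(f"spread:    {fused_uncertainty(spread):.3e}")
    print(f"clustered: {fused_uncertainty(clustered):.3e}")
```

In this model the trace of the summed information matrix does not depend on the viewing angles, so its determinant is largest (and the fused uncertainty smallest) when the summed matrix is isotropic, which three views 120° apart achieve. Views that differ by 180° carry the same information here, so only directions modulo π matter.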

2. Cooperative Detection and Tracking using DNNs on Multiple MAVs. [RA-L + IROS 2018 (published)] See Publications tab of this project page.

The goal of this research thread is to enable multi-camera tracking of humans and animals in outdoor environments, a highly relevant and challenging problem. Our approach involves a team of cooperating micro aerial vehicles (MAVs) with on-board cameras only. Deep neural networks (DNNs) often fail to detect small-scale objects or objects far away from the camera, both of which are typical of scenarios with aerial robots. Thus, we address the core problem of how to achieve on-board, online, continuous and accurate vision-based detections using DNNs for visual person tracking from MAVs. Our solution leverages cooperation among multiple MAVs and active selection of the most informative regions of the image. We demonstrate the efficiency of our approach through simulations with up to 16 robots and real-robot experiments involving 2 aerial robots tracking a person while maintaining an active perception-driven formation. ROS-based source code is provided for the benefit of the community. Please see the Resources tab of this project page.
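One simple way to picture the active selection of image regions is to crop the camera image around the pixel where the tracked person is predicted to appear, so the DNN sees the person at a larger scale than in the downscaled full frame. The sketch below is an illustration under stated assumptions, not the released AirCap code: it assumes the predicted pixel position `(u, v)` and a pixel-space uncertainty `sigma_px` are supplied by the cooperative tracker, and that the detector takes a square input of side `min_size` (300 px, as for an SSD300 network). All names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned crop in pixel coordinates (top-left corner, width, height)."""
    x: int
    y: int
    w: int
    h: int


def select_roi(u, v, sigma_px, img_w, img_h, min_size=300, k=3.0):
    """Square crop around the predicted target pixel (u, v).

    Hypothetical rule: the side covers +/- k * sigma_px of projected
    uncertainty, is never smaller than the detector input size min_size,
    and never larger than the image itself.
    """
    side = max(min_size, int(math.ceil(2.0 * k * sigma_px)))
    side = min(side, img_w, img_h)
    x = int(round(u - side / 2))
    y = int(round(v - side / 2))
    # Shift the crop back inside the image instead of shrinking it
    x = max(0, min(x, img_w - side))
    y = max(0, min(y, img_h - side))
    return Box(x, y, side, side)


if __name__ == "__main__":
    # Confident prediction near the image centre of a 1920x1080 frame
    print(select_roi(960, 540, 100, 1920, 1080))
    # Prediction near the top-left corner: crop is shifted inward
    print(select_roi(100, 50, 20, 1920, 1080))
```

Shifting rather than shrinking the crop keeps the input at the detector's native resolution when the person is near the image border; detections found inside the crop map back to full-image coordinates by adding `(x, y)`.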

3. Multi-UAV Trajectory Planning and Collision Avoidance. [SSRR 2018 (published)] See Publications tab of this project page.

In this research thread, we consider the problem of obstacle avoidance in the context of decentralized multi-robot formation control. We have developed a method in which each robot executes a local motion planning algorithm based on model predictive control (MPC). The planner is designed as a quadratic program, subject to constraints on robot dynamics and obstacle avoidance. Repulsive potential field functions are employed to avoid obstacles. The novelty of our approach lies in embedding these non-linear potential field functions as constraints within a convex optimization framework. Our method convexifies non-convex constraints and dependencies by recasting them as external input forces in the robot dynamics. The proposed algorithm additionally incorporates several methods to avoid the local-minima problems associated with using potential field functions in planning. The motion planner does not enforce predefined trajectories or any formation geometry on the robots and is a comprehensive solution for cooperative obstacle avoidance in the context of multi-robot formation flying. In this work, we performed only simulation studies in different environmental scenarios to showcase the convergence and efficacy of the proposed algorithm. This work also motivated the development of an active perception-driven formation controller (see Research Thread 1).
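The idea of turning a potential-field term into an external input force can be sketched as follows. This is a hypothetical illustration, not the SSRR 2018 formulation: it assumes 2D double-integrator dynamics, point obstacles, and the classic Khatib repulsive potential with gain `eta` and influence radius `d0`. The forces are evaluated along the trajectory predicted in the previous MPC iteration, so within the current iteration they are constants and the dynamics stay linear in the control inputs; the QP solve itself is omitted.

```python
import math


def repulsive_force(p, obstacle, eta=1.0, d0=2.0):
    """Force -grad U for U = 0.5 * eta * (1/d - 1/d0)^2 (zero beyond d0).

    Assumed Khatib-style potential around a 2D point obstacle.
    """
    dx, dy = p[0] - obstacle[0], p[1] - obstacle[1]
    d = math.hypot(dx, dy)
    if d == 0.0:
        raise ValueError("robot position coincides with the obstacle")
    if d >= d0:
        return (0.0, 0.0)
    mag = eta * (1.0 / d - 1.0 / d0) / (d * d)
    return (mag * dx / d, mag * dy / d)  # points away from the obstacle


def forces_along(traj, obstacles, eta=1.0, d0=2.0):
    """Summed repulsive force at each position of a previously predicted plan."""
    forces = []
    for p in traj:
        fx = fy = 0.0
        for obs in obstacles:
            ox, oy = repulsive_force(p, obs, eta, d0)
            fx += ox
            fy += oy
        forces.append((fx, fy))
    return forces


def predict(pos, vel, controls, forces, dt=0.1):
    """Roll out 2D double-integrator dynamics over the horizon.

    forces[k] enters as a known external acceleration, so each predicted
    position is an affine function of the controls (the QP decision variables).
    """
    px, py = pos
    vx, vy = vel
    traj = []
    for (ux, uy), (fx, fy) in zip(controls, forces):
        ax, ay = ux + fx, uy + fy
        px += vx * dt + 0.5 * ax * dt * dt
        py += vy * dt + 0.5 * ay * dt * dt
        vx += ax * dt
        vy += ay * dt
        traj.append((px, py))
    return traj


if __name__ == "__main__":
    horizon = 10
    controls = [(1.0, 0.0)] * horizon      # hypothetical plan: accelerate along +x
    obstacles = [(1.5, 0.3)]
    prev = predict((0.0, 0.0), (0.0, 0.0), controls, [(0.0, 0.0)] * horizon)
    forces = forces_along(prev, obstacles)  # constants for this iteration
    print(predict((0.0, 0.0), (0.0, 0.0), controls, forces)[-1])
```

Because the forces are fixed before each solve, the obstacle term never appears as a non-convex constraint; the price is that the force is only as accurate as the previous prediction, which is why it is re-evaluated at every MPC iteration.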

Source Code and Resources

News: Nodes and packages specific to our RA-L + ICRA 2019 submission have been added to our GitHub project page.

GitHub project page with all sources: AirCap Github Profile

List of required hardware

- Flying platform capable of carrying 2 kg of payload or more

- OpenPilot Revolution flight controllers

- HD Cameras

- NVIDIA Jetson TX1 embedded GPU

- On-board computer (PC): Intel Core i7 CPU, Ubuntu 16.04

List of required 3rd party Open Source Packages

Project Source Code

- LibrePilot modified flight controller firmware and ROS interface (based on LibrePilot)

- SSD Multibox detection server (based on SSD Multibox)

- AirCAP Main Public Code Repository

- RotorS Gazebo simulation environment specific to the AirCap project (based on the RotorS simulator)
